Because read operations are mapped to snapshot isolation transaction level, the cleanup of ghost records on the primary replica can be blocked by transactions on one or more secondary replicas. The ghost record cleanup task will automatically clean up the ghost records for disk-based tables on the primary replica when they are no longer needed by any secondary replica. This is similar to what is done when you run transaction(s) on the primary replica. In the extreme case on the secondary database, you will need to kill a long running read-query that is blocking the ghost cleanup. Note, the ghost cleanup can be blocked if the secondary replica gets disconnected or when data movement is suspended on the secondary database. This state also prevents log truncation, so if this state persists, we recommend that you remove this secondary database from the availability group.

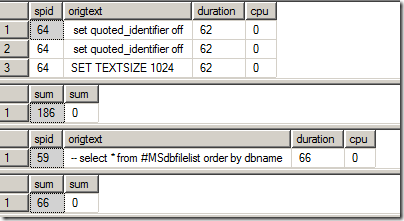

The above is a snippet from the official Microsoft documentation for Availability Group Secondary Replicas under the limitations and restrictions section.
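The rule in that snippet can be made concrete as a small Python sketch of the check the primary has to pass before it can purge a ghost record. This is a simplified model and not SQL Server's implementation. `ReplicaState`, `ghost_removable`, and the comparison between a snapshot's start sequence number and the deleting transaction's ID are all assumptions made for illustration. Following the documentation, a disconnected replica, or one whose data movement is suspended, is treated as blocking.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass
class ReplicaState:
    """What the primary knows about one replica (hypothetical model)."""
    name: str
    oldest_snapshot_start: Optional[int] = None  # None: no open snapshot transaction
    connected: bool = True  # False: disconnected, or data movement suspended


def ghost_removable(deleted_by: int, replicas: Sequence[ReplicaState]) -> bool:
    """Return True if a ghost left by transaction `deleted_by` may be purged.

    Simplified rule, assumed for illustration: keep the ghost while any
    replica might still need the row version that existed before the delete.
    """
    for replica in replicas:
        if not replica.connected:
            # The primary cannot see this replica's horizon, so it has to wait.
            return False
        start = replica.oldest_snapshot_start
        if start is not None and start <= deleted_by:
            # A snapshot that began before the delete committed may still read the row.
            return False
    return True


if __name__ == "__main__":
    primary = ReplicaState("primary")
    reader = ReplicaState("secondary", oldest_snapshot_start=11900)
    print(ghost_removable(11900, [primary, reader]))  # False: secondary snapshot is open
    reader.oldest_snapshot_start = None                # the secondary commits
    print(ghost_removable(11900, [primary, reader]))  # True
    reader.connected = False                           # the secondary drops out
    print(ghost_removable(11900, [primary, reader]))  # False
```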

So a transaction on a secondary replica can block an operation on the primary replica… Hmm. Now that smells like a mystery!

Before I go further, let me explain what Ghost Cleanup does. Here is the official text from Books Online.

Delete operations from a table or update operations that cause a row to move can immediately free up space on a page by removing references to the row. However, under certain circumstances, the row can physically remain on the data page as a ghost record. Ghost records are periodically removed by a background process. This residual data is not returned by the Database Engine in response to queries.
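A toy Python model of that lifecycle may help. A delete only flags the record as a ghost, queries skip ghosts, and a separate background pass removes them physically. The `Record` and `Page` classes are hypothetical, and the model does not try to capture the circumstances under which SQL Server actually chooses to ghost a row.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Record:
    value: int
    ghost: bool = False  # set by a delete; the row stays on the page until cleanup


@dataclass
class Page:
    records: List[Record] = field(default_factory=list)

    def delete(self, value: int) -> int:
        """Mark matching rows as ghosts instead of physically removing them."""
        count = 0
        for rec in self.records:
            if not rec.ghost and rec.value == value:
                rec.ghost = True
                count += 1
        return count

    def scan(self) -> List[int]:
        """Queries never return ghost records."""
        return [rec.value for rec in self.records if not rec.ghost]

    def ghost_cleanup(self) -> int:
        """Background pass: physically remove ghosts and report how many went."""
        before = len(self.records)
        self.records = [rec for rec in self.records if not rec.ghost]
        return before - len(self.records)


if __name__ == "__main__":
    page = Page([Record(1), Record(3), Record(3)])
    print(page.delete(3))       # 2
    print(page.scan())          # [1]
    print(len(page.records))    # 3: the ghosts still occupy the page
    print(page.ghost_cleanup()) # 2
    print(len(page.records))    # 1
```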

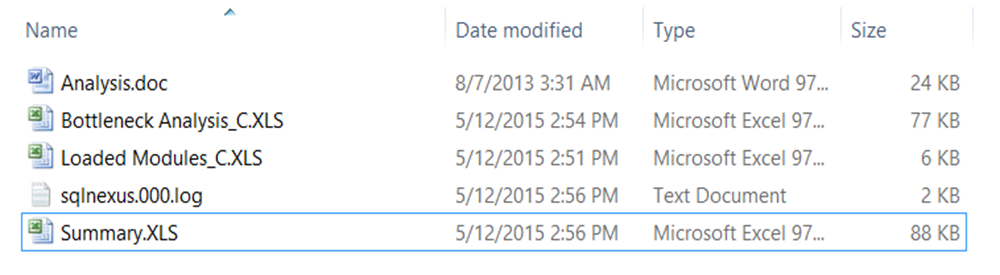

I had some free time a while back, so I decided to track this down and show how Ghost Cleanup actually works on availability group replicas. My availability group setup was a simple one: two SQL Server instances in two different subnets, as shown in Screenshot 1.

So, let’s get the show on the road. Let me walk you through the ghost cleanup behavior on the secondary replica.

The DML

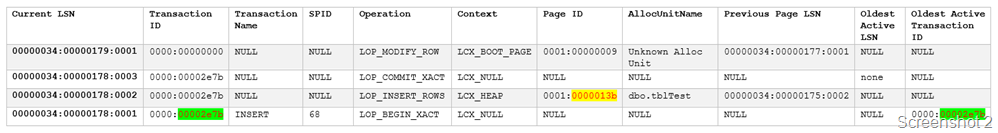

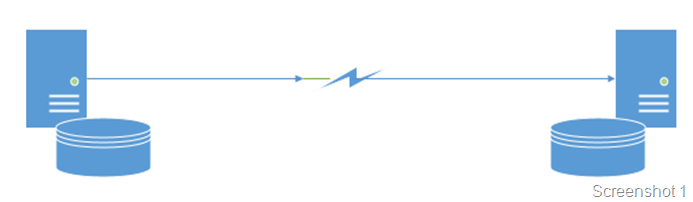

On my existing Availability Group setup, I inserted a single row with the value 3 into a table in the primary replica database. The logged operations show up as follows in the SQL Server transaction log. Psst… don’t tell anyone that I was reading the log file!

As you can see from the green highlight above, the transaction ID is 11899 (0x2e7b), and this transaction inserted a row on page ID 315 (0x13b).
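The log output and this post switch between decimal and hexadecimal IDs. A quick Python check confirms the pairs quoted here, including the delete transaction that comes up later:

```python
# (decimal, hex) pairs as quoted in this post.
QUOTED_IDS = {
    "insert transaction": (11899, "0x2e7b"),
    "delete transaction": (11900, "0x2e7c"),
    "page": (315, "0x13b"),
}

for label, (decimal, hex_text) in QUOTED_IDS.items():
    # int(..., 16) accepts the optional "0x" prefix; hex() emits lowercase digits.
    assert int(hex_text, 16) == decimal
    assert hex(decimal) == hex_text
    print(f"{label}: {decimal} = {hex_text}")
```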

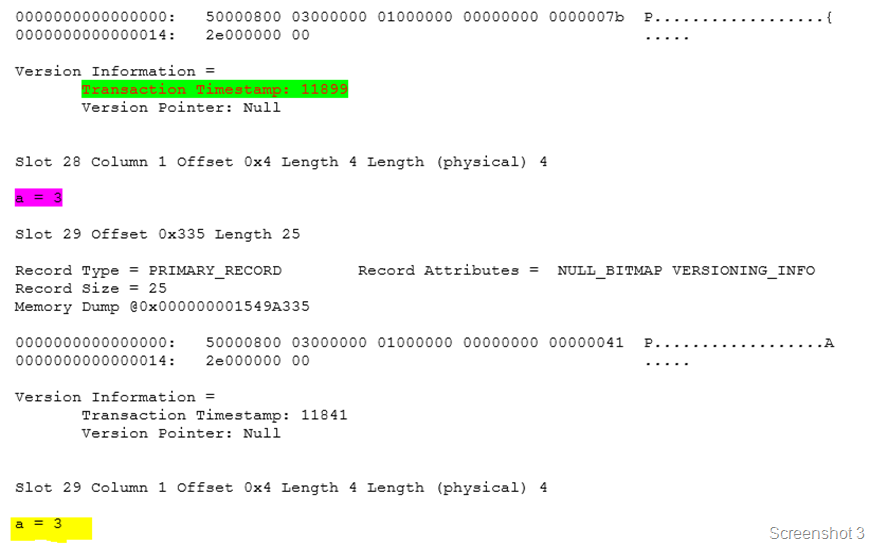

What was on the page

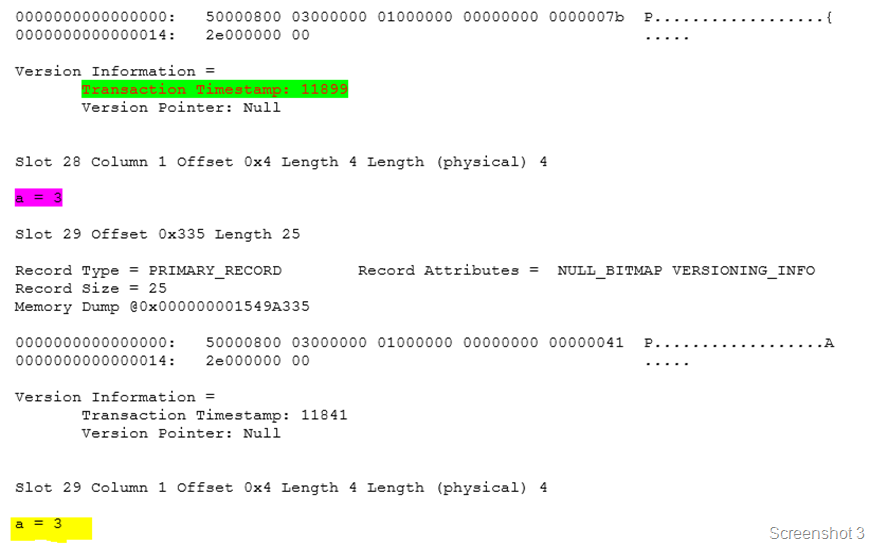

Using the page ID retrieved from the transaction log, I verified that the page in the primary replica database contained the new entry that I had added to the heap. Note that the oldest active transaction is being tracked as well. The record shows the timestamp of the transaction responsible for the DML operation, 11899 (0x2e7b). In Screenshot 2, you can see that the version information is maintained and the transaction timestamp shows up correctly (green highlight). The value also shows up correctly (pink highlight).

What happened after that?

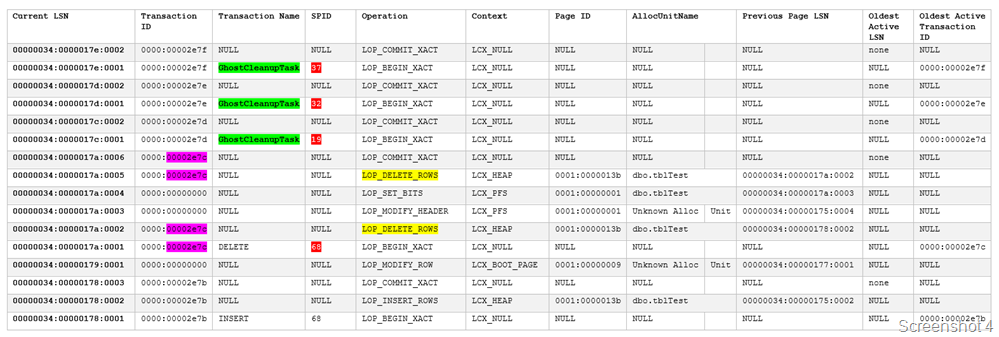

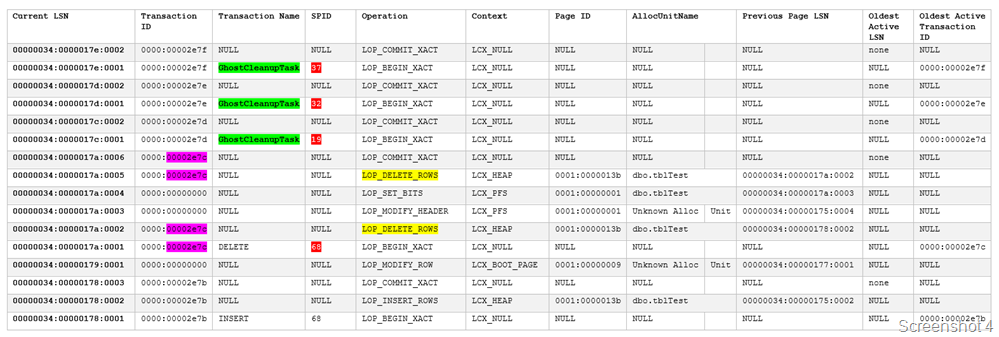

Next, I started a transaction on the secondary replica and ran a SELECT query against the same table with a HOLDLOCK hint to hold the row lock. I then deleted both rows with the value 3 on the primary replica, and verified that a SELECT query no longer returned them on either the primary or the secondary replica. The transaction log dump from the secondary replica shows that the changes were replayed.

Oops! I had to read the transaction log again.

The green highlights in Screenshot 4 show that the GhostCleanupTask transaction ran on the secondary replica. The pink highlights show that transaction ID 11900 (0x2e7c) deleted two rows from page ID 315 (0x13b). So all is good now.

Curiosity killed the cat!

Well, curiosity got the better of me, and I decided to check whether the same story was being told inside the transaction log of the primary replica database. This is where the faces of David Duchovny (a.k.a. Agent Mulder) and Gillian Anderson (a.k.a. Agent Scully) from The X-Files are an apt representation of what I present next.

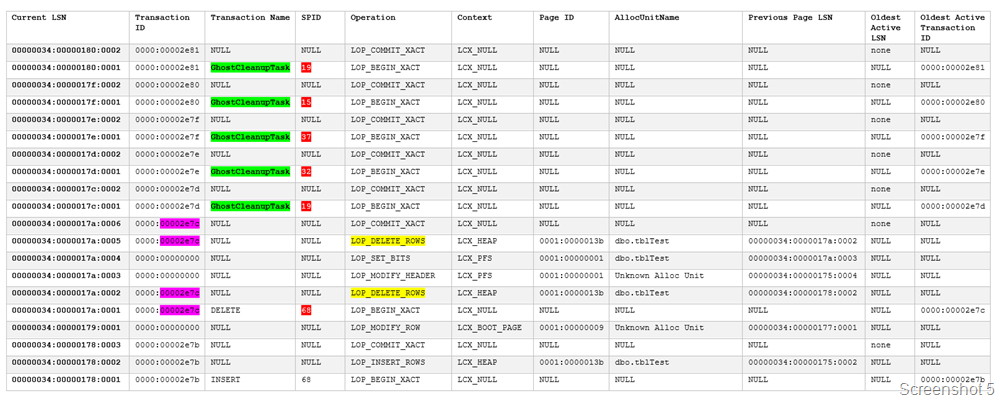

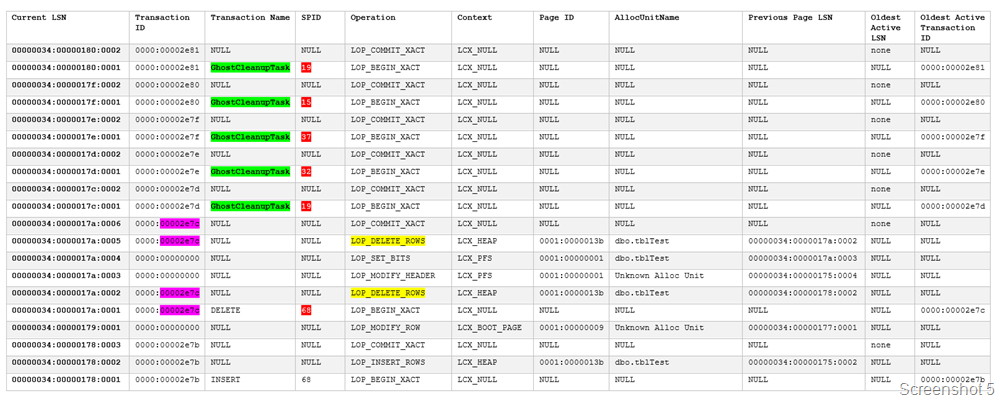

Screenshot 5 shows that the Ghost Cleanup Task continues to execute on the primary replica database! Why was it still running? Didn’t we delete the rows and verify that everything was alright…

The first observation is that the transaction log is being replayed to the letter on the secondary replica. Notice that the transaction IDs of the Ghost Cleanup Task on the primary match the transaction IDs of the Ghost Cleanup Task found in the secondary replica database. The documentation wasn’t joking when it said that transactions are replayed on the secondary replica!

The yellow highlights show that the rows were deleted from the table that I had performed the delete on. The pink highlights confirm that the same transaction was associated with both deletes.

I had verified that there were no ghost records in the database when I started the repro. So, the important question was:

Why was the Ghost Cleanup Task running repeatedly on the primary replica database?

What was really happening: THE EXPLANATION

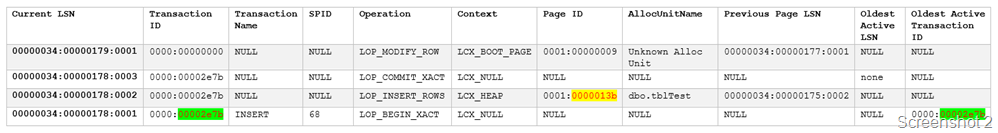

Because I had an open transaction on the secondary replica database, I had an active version store! Screenshot 6 shows my active version store on the secondary replica, with a transaction sequence number of 11900 (0x2e7c), which matches the transaction ID of the delete operation. The delete operation created this active version store because, as described earlier in this post, I had run a SELECT with a HOLDLOCK hint on the table from the secondary replica.

Then I created a table in the primary replica database and inserted a row into it. I checked whether this data was available on the secondary replica, and it was!

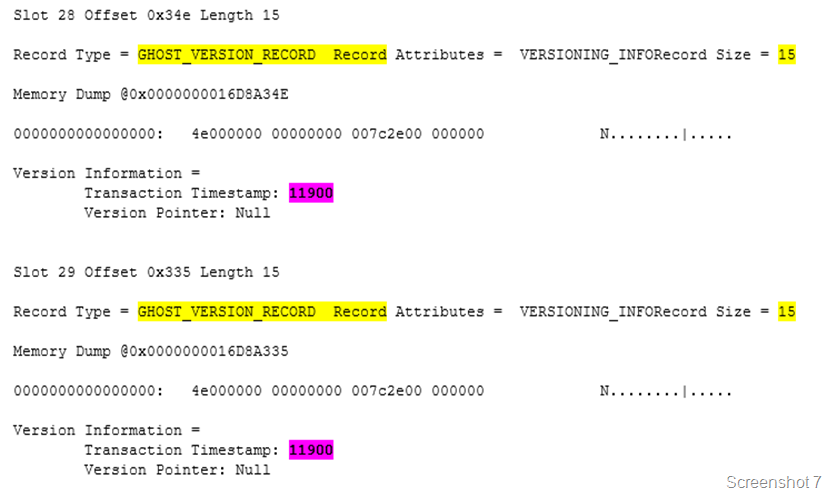

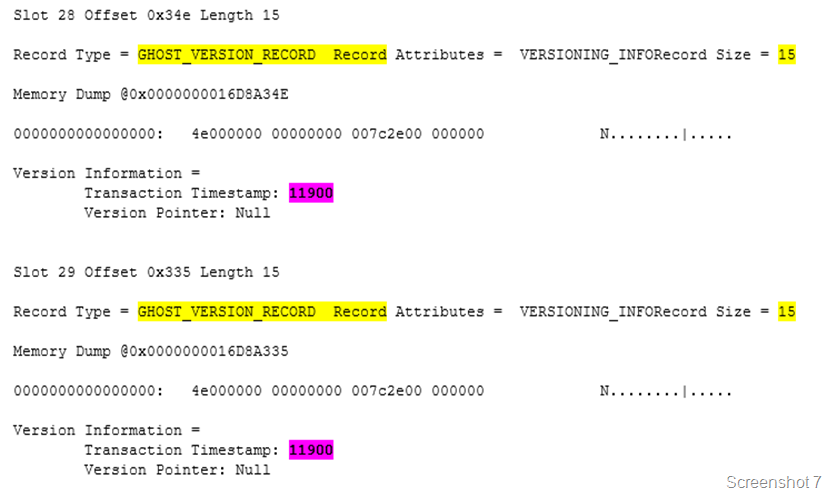

On the primary replica, I found that the database page shows ghost version records (Screenshot 7). The transaction timestamp matches the ID of the transaction that performed the delete operation, i.e., transaction ID 11900 (0x2e7c).

Light at the end of the tunnel

Once the transaction that I had started on the secondary replica with the HOLDLOCK hint was committed, the ghost cleanup task was able to clean up the primary replica’s page. After that completed successfully, the ghost records on the secondary replica were promptly cleaned up as well.

The above behavior is true for both synchronous and asynchronous modes of operation.
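The whole repro can be replayed as a small Python simulation. This is a sketch that rests on several assumptions. `Replica`, `AvailabilityGroup`, the log record tuple, and the blocking rule are hypothetical simplifications. Log records are shipped to the secondary immediately, and the two starting rows are illustrative. The simulation mirrors what the screenshots showed: the secondary only redoes what the primary logged, so its ghosts disappear only after the primary's ghost cleanup succeeds.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Set, Tuple

# A log record in this toy model: (operation, transaction id, row id).
LogRecord = Tuple[str, int, int]


@dataclass
class Replica:
    name: str
    rows: Set[int] = field(default_factory=set)            # visible rows
    ghosts: Dict[int, int] = field(default_factory=dict)   # ghost row -> deleting xact
    snapshot_start: Optional[int] = None                   # open snapshot txn, if any

    def redo(self, rec: LogRecord) -> None:
        """Apply one log record exactly as the primary logged it."""
        op, xact, row = rec
        if op == "delete":
            self.rows.discard(row)
            self.ghosts[row] = xact        # row stays on the page as a ghost
        elif op == "ghost_cleanup":
            self.ghosts.pop(row, None)     # ghost is physically removed


@dataclass
class AvailabilityGroup:
    primary: Replica
    secondary: Replica
    next_xact: int
    log: List[LogRecord] = field(default_factory=list)

    def _commit(self, op: str, rows: List[int]) -> int:
        """Run one transaction on the primary and ship its log records."""
        xact = self.next_xact
        self.next_xact += 1
        for row in rows:
            rec = (op, xact, row)
            self.primary.redo(rec)
            self.log.append(rec)
            self.secondary.redo(rec)       # shipped immediately in this model
        return xact

    def delete(self, rows: List[int]) -> int:
        return self._commit("delete", rows)

    def ghost_cleanup_task(self) -> int:
        """Purge primary ghosts that no replica's open snapshot can still need."""
        removable = [
            row
            for row, deleted_by in self.primary.ghosts.items()
            if all(r.snapshot_start is None or r.snapshot_start > deleted_by
                   for r in (self.primary, self.secondary))
        ]
        if removable:
            self._commit("ghost_cleanup", removable)
        return len(removable)


if __name__ == "__main__":
    primary = Replica("primary", rows={1, 2})
    secondary = Replica("secondary", rows={1, 2})
    ag = AvailabilityGroup(primary, secondary, next_xact=11900)

    secondary.snapshot_start = ag.next_xact  # open read transaction on the secondary
    print(ag.delete([1, 2]))                 # 11900
    print(ag.ghost_cleanup_task())           # 0: blocked by the secondary
    secondary.snapshot_start = None          # commit on the secondary
    print(ag.ghost_cleanup_task())           # 2
    print(primary.ghosts, secondary.ghosts)  # {} {}
```

In this model, the cleanup runs as a logged transaction on the primary (11901 in the toy run). The secondary's copy of the ghosts therefore goes away during the same redo, without any cleanup decision of its own.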

I hope this was a fun Friday read! Have a good weekend!

This month’s T-SQL Tuesday is being hosted by Jes Borland (