If you are looking to upgrade your SQL Server replication topology, then you can look at the post that I published on the Tiger blog.

MaxBCPThreads not causing parallel export

I recently ran into an issue where MaxBCPThreads was set to a non-zero value, yet the Snapshot Agent was still not creating multiple snapshot files for the table in question, which was over 70GB in size.

On further inspection, I found that the statistics distribution of the table in question was affecting the parallel file export by the Snapshot Agent. The ReplTalk blog on MSDN already documents that the concurrent snapshot generation option prevents the use of parallel BCP threads by the Distribution Agent.

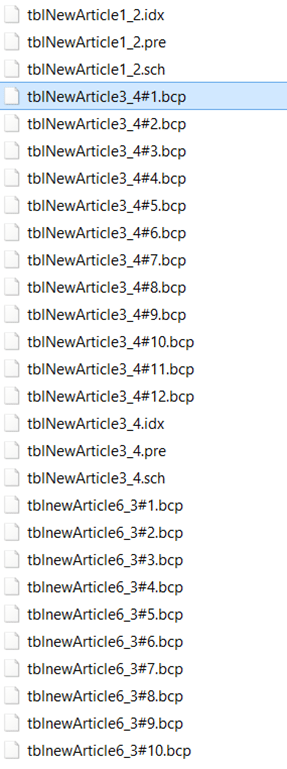

The next step was to find out why the parallel threads were not being generated. I created a repro of the situation on my side with a transactional replication publication having three tables with the same schema options. The snapshot folder after the Snapshot Agent’s execution is as shown in the screenshot.

tblNewArticle6 has 60,398 rows, tblNewArticle3 has 100,000 rows and tblNewArticle1 has 2 rows.

The MaxBCPThreads configured value for the snapshot agent is 6. Surprisingly, tblNewArticle6 has 12 BCP files and the tblNewArticle3 has only one BCP file.

Looking into a profiler trace and the verbose log for the Snapshot Agent, I found out the following information:

1. There is a thread which performs an exploration on what ranges the parallel BCP threads will be fetching. The application name in the profiler trace will show up as “Auxiliary bcp load ordering hint and partitioning resolution thread # for publication <publication name>”. See Screenshot 2 for more details.

2. You will also find dynamic SQL commands executing DBCC SHOW_STATISTICS against the tables in question.

In my case, tblNewArticle6 has a data distribution graph as shown in screenshot 3. It is clear from the screenshot that the tblNewArticle6 has multiple ranges available for the index id = 1. These were utilized by the Snapshot agent to generate 12 BCP files.
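If you want to look at the same histogram on your own system, something along these lines could be run in the published database. This is only a minimal sketch: the `dbo` schema is an assumption, and `PK_tblNewArticle6` is a hypothetical index name that you would replace with whatever the first query returns.

```sql
-- Find the name of the clustered index (index_id = 1) on the article's table.
-- The dbo schema is an assumption; adjust it to match your table.
SELECT i.name AS clustered_index_name
FROM sys.indexes AS i
WHERE i.object_id = OBJECT_ID(N'dbo.tblNewArticle6')
  AND i.index_id = 1;

-- Show the histogram steps for that index.
-- 'PK_tblNewArticle6' is a hypothetical name; substitute the name
-- returned by the query above.
DBCC SHOW_STATISTICS ('dbo.tblNewArticle6', 'PK_tblNewArticle6') WITH HISTOGRAM;
```

Each row of the histogram output is one step, and the RANGE_HI_KEY column holds the upper boundary value of that step.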

On looking into the Snapshot Agent verbose log, I find the following:

Partitioning where clauses for article ‘tblnewArticle6’:

([SalesOrderNumber] is null) or ([SalesOrderNumber] <= convert(nvarchar(7), 0x53004F0035003000380034003400) collate SQL_Latin1_General_CP1_CI_AS)

([SalesOrderNumber] > convert(nvarchar(7), 0x53004F0035003000380034003400) collate SQL_Latin1_General_CP1_CI_AS) and ([SalesOrderNumber] <= convert(nvarchar(7), 0x53004F0035003300310039003500) collate SQL_Latin1_General_CP1_CI_AS)

…

…

([SalesOrderNumber] > convert(nvarchar(7), 0x53004F0037003300300037003600) collate SQL_Latin1_General_CP1_CI_AS)

The where clauses above add up to 12 ranges, which is the same number of files that I see in my snapshot folder.
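The boundary values in the where clauses are logged as binary literals, which makes them hard to read. As a small illustration, the three boundaries quoted above can be converted back to text with the same `convert(nvarchar(7), ...)` expression that the log uses. The column aliases are mine and are only for readability.

```sql
-- Each boundary in the log is the nvarchar (UTF-16) encoding of a key value.
-- Converting the binary literal back to nvarchar shows the readable value.
SELECT
    CONVERT(nvarchar(7), 0x53004F0035003000380034003400) AS first_boundary,   -- SO50844
    CONVERT(nvarchar(7), 0x53004F0035003300310039003500) AS second_boundary,  -- SO53195
    CONVERT(nvarchar(7), 0x53004F0037003300300037003600) AS last_boundary;    -- SO73076
```

These readable values can then be compared against the boundary values shown in the statistics histogram for the clustered index.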

Now looking at tblNewArticle3, which has 100,000 rows, the DBCC SHOW_STATISTICS output showed only two distinct ranges. Another point to note was that the primary key of the table has a non-clustered index defined on it and the clustered index key is a non-primary-key column. Even after increasing the number of rows in tblNewArticle3, I found that only one BCP file was being generated. On additional investigation, I found that there is also a requirement that the clustered index contain the primary key columns of the published article. This is evaluated in one of the system stored procedures for replication.
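A quick way to spot this situation is to list the clustered index and the primary key index of a table side by side. The query below is a sketch of my own, not the check that the replication stored procedure performs, and it assumes the table lives in the `dbo` schema.

```sql
-- List the key columns of the clustered index and of the primary key index
-- so you can see whether they are the same index.
-- The dbo schema is an assumption; adjust it to match your table.
SELECT i.name          AS index_name,
       i.type_desc     AS index_type,      -- CLUSTERED or NONCLUSTERED
       i.is_primary_key,
       c.name          AS key_column,
       ic.key_ordinal
FROM sys.indexes AS i
JOIN sys.index_columns AS ic
  ON ic.object_id = i.object_id
 AND ic.index_id  = i.index_id
JOIN sys.columns AS c
  ON c.object_id = ic.object_id
 AND c.column_id = ic.column_id
WHERE i.object_id = OBJECT_ID(N'dbo.tblNewArticle3')
  AND (i.index_id = 1 OR i.is_primary_key = 1)
  AND ic.is_included_column = 0
ORDER BY i.index_id, ic.key_ordinal;
```

If the output shows two different indexes, one CLUSTERED with is_primary_key = 0 and one NONCLUSTERED with is_primary_key = 1, the table is in the same state as tblNewArticle3 in my repro.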

Some deep diving into the profiler traces, snapshot agent logs and debugging the stored procedures will help you arrive at the following conclusion:

Multiple BCP files will be created by the Snapshot Agent only if the following are true:

1. There are multiple distinct ranges available in the clustered index’s statistics histogram to create partitions which can be used by the BCP program to extract the data in parallel.

2. The clustered index must be defined on the primary key columns.

3. MaxBCPThreads is set to a value greater than 1.

If all of the above are true, then you will see multiple BCP files created by the Snapshot Agent for the table of interest. Note that the above information is simplified to ensure that I comply with my NDA. After making the above changes, I see the following output in the repldata folder shown below in the screenshot:

Additional Reference:

Improving snapshot performance using MaxBCPThreads

Enabling Transactional Replication: A bit of help

Over the past few months, I have discussed the feasibility of enabling transactional replication for customer databases on various occasions. Every time I end up writing queries to answer certain questions about the database… the most common one being if the tables that need to be replicated have primary keys.

So I finally decided to write a T-SQL script which will help me answer the most common questions asked about a database while deciding on the feasibility of enabling transactional replication.

The script doesn’t capture information like workload, performance metrics etc. to decide if the replication workload (snapshot and distribution agent) can be supported on the existing hardware and resources available in the environment.

My take on the matter is that this information is required only once we have figured out if transactional replication can be enabled on the database or not. For example, if the main tables that need to be replicated do not have primary keys, then the question of resource availability and hardware capability is a moot point!

The script below checks the following:

1. Existence of primary keys on the tables in the database. Objects (articles) without primary keys cannot be replicated as part of a transactional replication publication.

2. If the database has Transparent Data Encryption (TDE) enabled. The subscriber database is not automatically enabled for TDE in such a scenario.

3. Which constraints, primary keys, triggers and identity columns have the NOT FOR REPLICATION bit set and which do not. You might choose to replicate or not replicate some of these objects. However, you need to be aware of what you are replicating.

4. Tables having ntext, text and image columns as there are special considerations for handling DMLs on such columns.

5. XML schema collections present in the database. Modifications to the XML Schema collection are not replicated.

6. Tables with sparse column sets as they cannot be replicated.

7. Objects created using WITH ENCRYPTION option. Such objects cannot be replicated either.
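To give an idea of what such checks can look like, here is a rough sketch built on the catalog views. It is not the original script, it assumes SQL Server 2008 or later, and it is meant to be run in the database being evaluated. For the NOT FOR REPLICATION check it covers only the object types for which the catalog views expose an `is_not_for_replication` column.

```sql
-- 1. User tables without a primary key.
SELECT SCHEMA_NAME(t.schema_id) AS schema_name, t.name AS table_name
FROM sys.tables AS t
WHERE t.is_ms_shipped = 0
  AND OBJECTPROPERTY(t.object_id, 'TableHasPrimaryKey') = 0;

-- 2. Is TDE enabled on the current database?
SELECT name, is_encrypted
FROM sys.databases
WHERE database_id = DB_ID();

-- 3. NOT FOR REPLICATION setting on identity columns, triggers,
--    foreign keys and check constraints.
SELECT 'identity column' AS object_type,
       OBJECT_NAME(object_id) AS table_name, name, is_not_for_replication
FROM sys.identity_columns
WHERE OBJECTPROPERTY(object_id, 'IsUserTable') = 1
UNION ALL
SELECT 'trigger', OBJECT_NAME(parent_id), name, is_not_for_replication
FROM sys.triggers
WHERE parent_class = 1          -- DML triggers on tables or views
UNION ALL
SELECT 'foreign key', OBJECT_NAME(parent_object_id), name, is_not_for_replication
FROM sys.foreign_keys
UNION ALL
SELECT 'check constraint', OBJECT_NAME(parent_object_id), name, is_not_for_replication
FROM sys.check_constraints;

-- 4. Tables with text, ntext or image columns.
SELECT SCHEMA_NAME(t.schema_id) AS schema_name, t.name AS table_name,
       c.name AS column_name, TYPE_NAME(c.system_type_id) AS data_type
FROM sys.columns AS c
JOIN sys.tables AS t
  ON t.object_id = c.object_id
WHERE TYPE_NAME(c.system_type_id) IN (N'text', N'ntext', N'image');

-- 5. User-defined XML schema collections.
SELECT SCHEMA_NAME(schema_id) AS schema_name, name
FROM sys.xml_schema_collections
WHERE SCHEMA_NAME(schema_id) <> N'sys';

-- 6. Tables that have a sparse column set.
SELECT OBJECT_SCHEMA_NAME(object_id) AS schema_name,
       OBJECT_NAME(object_id) AS table_name, name AS column_set_name
FROM sys.columns
WHERE is_column_set = 1;

-- 7. Objects created WITH ENCRYPTION.
SELECT OBJECT_SCHEMA_NAME(object_id) AS schema_name, name, type_desc
FROM sys.objects
WHERE OBJECTPROPERTY(object_id, 'IsEncrypted') = 1;
```

Each query returns its own result set, so an empty result for queries 1, 4, 5, 6 and 7 means that the corresponding check found nothing to flag.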

As always, in case you think that there are additional checks that could be included in the script, then please leave a comment on my blog and I will add the same into the script.

Script to remove a constraint and drop columns

Recently there was a need to get rid of a replication column after replication was disabled on a SQL Server 2000 database. The customer wanted to get rid of a column which replication had added to all the tables. The catch was that this column had a constraint defined on it. I looked at the constraint names and found that they had a common sub-string “msrepl_tran”. So by joining the system catalogs sysconstraints and syscolumns, we were able to create the necessary T-SQL scripts to drop the constraint and the column from the tables involved.

select 'ALTER TABLE [' + OBJECT_NAME(a.id) + '] drop constraint [' + OBJECT_NAME(a.constid) + ']' + CHAR(13) + 'alter table [' + OBJECT_NAME(a.id) + '] drop column ['+ b.name + ']' + CHAR(13) + 'go' from sysconstraints a inner join syscolumns b on a.id = b.id and a.colid = b.colid where OBJECT_NAME(a.constid) like '%msrepl_tran%'

NOTE: Press CTRL+T to switch the results to text and then F5 to run the query above and generate the script. Inspect the output to ensure that no unintended table gets affected.

Replication Agent has not logged a message in 10 minutes

I saw multiple posts on MSDN regarding the following message:

“The replication agent has not logged a progress message in 10 minutes. This might indicate an unresponsive agent or high system activity.”

More often than not the above message can be ignored safely. The next obvious question is when can this message be ignored. If you find that your replication agent history is not reporting any error messages, then the above message is benign. Typically, this message means the agent is busy doing its work and has no resources to respond to status inquiries.

Why does this error message get generated?

This error message gets generated because of the Distributor's heartbeat interval property. This property governs how long an agent can run without logging a progress message. If your replication agents are not reporting an error message and you are seeing the above message, then you could change your heartbeat interval to a higher value. One possible cause is that you changed the history logging option for your replication agent so that it doesn’t log any messages.

Query:

exec sp_changedistributor_property @property = 'heartbeat_interval', @value = <value in minutes>;

Reference: sp_changedistributor_property (Transact-SQL)

I have read lots of opinions as to why this is a bug and if you subscribe to that school of thought, please create a Connect item at http://connect.microsoft.com/sqlserver.